Human-in-the-Loop Was Never a Design Spec. It Was a Prayer.

“Keep a human in the loop” has become enterprise AI’s default safety answer. New research suggests that without a real design behind it, that answer may be making oversight worse, not better.

Last week, I wrote about how AI interfaces can train users to stop thinking, about the way the design of those systems, at the level of the individual screen, can quietly erode the kind of critical engagement that makes human judgment worth having in the first place. If you haven’t read that piece yet, I’d suggest starting there. What I want to explore here is the same failure, but one layer up. The organizational equivalent of that design mistake is not a poorly configured chat interface but a phrase that has somehow graduated from design principle to risk management strategy. That phrase is “keep a human in the loop,” and it is, in most enterprise deployments I’ve observed, a prayer disguised as a policy.

The phrase entered enterprise vocabulary as a reasonable shorthand for a constraint: when AI systems make decisions with consequential outcomes, a human should be able to review and override. That’s sensible. What’s less sensible is how rarely it comes attached to a specification. “Human-in-the-loop” gets invoked in compliance reviews and vendor evaluations and board presentations, and it almost never comes with answers to the questions that actually matter:

The answers, when they exist at all, tend to be improvised after deployment rather than designed before it.

The most unsettling data point I’ve encountered on this topic comes from a Harvard Business School working paper by Jacqueline Lane and colleagues (HBS Working Paper 25-001). In a controlled experiment with 228 evaluators making over 3,000 screening decisions, the researchers tested whether giving evaluators AI-generated explanations alongside AI recommendations (so-called “narrative AI,” as distinct from black-box AI) improved the quality of human oversight. The intuition seems sound: if reviewers understand why the AI made a recommendation, they should be better equipped to push back when the AI is wrong.

The actual finding is almost perfectly backward. Evaluators given AI explanations were 19 percentage points more likely to simply align with the AI’s recommendation than those given no explanation at all. Narrative AI did not improve decision quality over black-box AI; the explanations functioned as cognitive shortcuts that made evaluators more deferential, not less. The damage was not symmetric, either: narrative AI increased rejection of top-tier ideas when the AI opposed them, which means the oversight mechanism was not just ineffective but was actively making the worst outcomes more likely. So I suggest that if you haven’t defined what the human is supposed to be evaluating before deployment, adding a human to the loop doesn’t add oversight; it adds a rubber stamp with a salary.

If you haven’t defined what the human is supposed to be evaluating before deployment, adding a human to the loop doesn’t add oversight; it adds a rubber stamp with a salary.

This is the oversight paradox. The design intervention intended to make human review more meaningful made it less meaningful, because nobody had defined what the reviewing was actually supposed to accomplish.

The HBS finding doesn’t stand alone. A 2024 paper in the Journal of Responsible Technology (Bley et al.) asked a more fundamental question: is effective human oversight of AI systems still possible at scale? Their answer identifies three converging barriers that compound each other in ways that make improvised oversight untenable.

Researchers at Harvard Business Review have put a name to the second of these: “AI brain fry,” defined as “mental fatigue from excessive use or oversight of AI tools beyond one’s cognitive capacity.” The most mentally taxing form of AI engagement, they found, was oversight specifically, with high levels of AI oversight predicting 12% more mental fatigue. Workers experiencing brain fry reported 33% more decision fatigue than those who did not, which, if you think about it, means the review quality your oversight model depends on degrades in direct proportion to how much your reviewers are being asked to review.

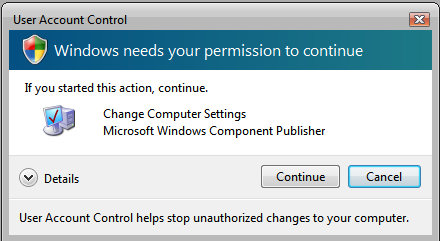

If you need a historical precedent for what happens when you ask humans to approve too many things too often, consider Windows Vista. Microsoft, at the height of virus and malware concerns, decided the solution was to ask users for explicit confirmation before allowing virtually anything to execute. The result was not the safety net they intended; it was a nag that trained users to click “Allow” reflexively, without reading, because the cognitive cost of actually evaluating each prompt exceeded anyone’s patience. The mechanism designed to protect users almost certainly let harmful actions through, because fatigue had turned oversight into a reflex. Microsoft learned from this and fundamentally changed their approach in later versions of Windows. The parallel to enterprise AI oversight is instructive: if your human-in-the-loop process asks reviewers to evaluate everything, it is, over time, training them to scrutinize nothing.

None of these barriers are immovable, but all of them are addressable through design and requirements work that happens before deployment, not after. If you know that prolonged monitoring produces fatigue, that’s a specification: define the review scope, the escalation criteria, the decision authority before the system goes live. If you know the skills gap is real, that’s a system design constraint: the AI should produce outputs that available reviewers can actually evaluate, and the interface should surface what matters rather than everything at once. What the research reveals is not that human oversight is impossible; it’s that oversight without design is not oversight. It’s exposure.

Generative AI is inherently probabilistic. That’s not a flaw; it’s the nature of how these systems produce outputs, and in most applications it’s appropriate and valuable. What’s less appropriate is treating human-in-the-loop review as a reliable catch for everything that falls outside an acceptable risk window, because what the research consistently shows is that humans under cognitive load don’t become more critical of AI outputs; they become more deferential to them.

NIST names this directly. Section 2.7 of AI 600-1, the federal AI risk management framework for generative AI, identifies it as a risk that “over time, humans may over-rely on GAI systems or may unjustifiably perceive GAI content to be of higher quality than that produced by other sources,” what the document explicitly calls “automation bias, or excessive deference to automated systems.” Section 2.2 on confabulation reinforces the problem: confabulated outputs are particularly dangerous precisely because of “the confident nature of the response,” which leads users to act on false information rather than question it. The outputs hardest for humans to catch are the ones that look right.

The EU AI Act, which begins enforcement on August 2, 2026, mandates human oversight for high-risk AI systems, and compliance guidance from Orrick makes clear that effective governance requires “people with diverse profiles from different parts of the company” contributing to AI frameworks. The regulation requires oversight. It does not specify what effective oversight looks like. That specification is, in most organizations, the work nobody is doing.

The regulation requires oversight. It does not specify what effective oversight looks like. That specification is, in most organizations, the work nobody is doing.

The compound effect is what should concern enterprise leaders. You have AI systems producing confident-sounding outputs across a wide probabilistic range. You have human reviewers operating under cognitive load, drifting toward deference as oversight volume increases. And you have the HBS finding sitting underneath all of it, showing that the design interventions intended to help reviewers exercise judgment are, absent a clear definition of what that judgment should accomplish, more likely to suppress it. This is not a technology failure; it’s the predictable consequence of treating “keep a human in the loop” as a destination rather than a starting point.

There is a version of this that works, and it starts with a different question. Instead of “how do we add a human review step?” the question should be “what does meaningful oversight actually require, and what does the AI need to do to make that oversight viable rather than exhausting?” That second part matters, because if the AI is producing outputs that routinely require intensive human scrutiny, the oversight burden is a symptom of an upstream design failure, and that is precisely why both UX strategy and AI strategy must be collaborative. You cannot design for cognitive sustainability if the people designing the interaction and the people designing the AI’s behavior are in separate rooms.

A useful place to start, before that broader conversation, is a single question you can ask about any AI deployment your organization is running right now: Have you defined, in writing, what the human reviewer is supposed to evaluate, and the criteria they should apply? If the answer is no, the gap is identified. The next piece in this series takes it from there.

Matt leads Planorama Design, a product acceleration firm for enterprise software teams. With nearly 30 years of engineering experience, he helps CTOs and VPs of Engineering structure requirements, validate AI feasibility, and ship better software faster.

Most AI interfaces are designed to deliver output, not to help users evaluate it. If your product presents AI-generated content without prompting users to engage critically, the interface itself is the problem.

AI-generated information can actually threaten your users' productivity. Thoughtful design of AI-enabled features can boost it instead.